Research on hardware security and infrastructure-level mechanisms for monitoring and securing AI development and deployment — including side-channel analysis, cluster security, and physical-layer verification.

Central evaluation examples include:

- CodeSignal test

- Paper analysis/critique

- ML technical test

Eliciting Harmful Capabilities by Fine-Tuning on Safeguarded Outputs

Model developers implement safeguards in frontier models to prevent misuse, for example by employing classifiers to filter dangerous outputs. In this work, we demonstrate that even robustly safeguarded models can be used to elicit harmful capabilities in open-source models through elicitation attacks. Our elicitation attacks consist of three stages: (i) constructing prompts in domains adjacent to a target harmful task that do not request dangerous information; (ii) obtaining responses to these prompts from safeguarded frontier models; and (iii) fine-tuning open-source models on these prompt-output pairs. Because these prompts do not request information that could directly cause harm, frontier model safeguards do not refuse them. We evaluate these elicitation attacks within the domain of hazardous chemical synthesis and processing, and demonstrate that our attacks recover approximately 40% of the capability gap between the base open-source model and an unrestricted frontier model. We then show that the efficacy of elicitation attacks scales with the capability of the frontier model and the amount of generated fine-tuning data. Our work demonstrates the challenge of mitigating ecosystem-level risks with output-level safeguards.

Authors:

Jackson Kaunismaa

Date:

May 7, 2026

Claudini: Autoresearch Discovers State-of-the-Art Adversarial Attack Algorithms for LLMs

LLM agents like Claude Code can not only write code but also carry out autonomous AI research and engineering. We show that an autoresearch-style pipeline powered by Claude Code discovers novel white-box adversarial attack algorithms that significantly outperform all 30+ existing methods in jailbreaking and prompt injection evaluations.

Starting from existing attack implementations, such as GCG, the agent iterates to produce new algorithms achieving up to 40% attack success rate on CBRN queries against GPT-OSS-Safeguard-20B, compared to ≤10% for existing algorithms. The discovered algorithms generalize: attacks optimized on surrogate models transfer directly to held-out models, achieving 100% ASR against Meta-SecAlign-70B versus 56% for the best baseline. Extending the findings of AutoAdvExBench, our results are an early demonstration that incremental safety and security research can be automated using LLM agents. White-box adversarial red-teaming is particularly well-suited for this: existing methods provide strong starting points, and the optimization objective yields dense, quantitative feedback. We release all discovered attacks alongside baseline implementations and evaluation code at this https URL.

Authors:

Alexander Panfilov

Date:

May 7, 2026

Distillation Robustifies Unlearning

Current LLM unlearning methods are not robust: a few steps of fine-tuning can revert their effects. We begin by showing that this holds even for an idealized form of unlearning: training to imitate a model that was never trained on unwanted information. This shows that training can drastically modify a model's input-output behavior while leaving its underlying capabilities intact. Building on this observation, we present our main result: training a randomly initialized student on the outputs of an unlearned model transfers behaviors while leaving latent capabilities behind. In short, distillation robustifies unlearning. Based on this result, we propose Unlearn-Noise-Distill-on-Outputs (UNDO), a scalable method that distills an unlearned model into a noised copy of itself. UNDO introduces a tunable tradeoff between compute cost and robustness, establishing a new Pareto frontier on synthetic language and arithmetic tasks. At its strongest setting, UNDO matches the robustness of a model retrained from scratch with perfect data filtering while using only 60-80% of the compute and requiring only 0.01% of the pretraining data to be labeled. We also show that UNDO robustifies unlearning on the more realistic Weapons of Mass Destruction Proxy (WMDP) benchmark. Since distillation is widely used in practice, incorporating an unlearning step beforehand offers a convenient path to robust capability removal.
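The "noised copy" step of UNDO can be pictured with a minimal sketch. It assumes, as an illustration rather than the authors' code, that noising means linearly blending each weight of the unlearned model with a freshly drawn random initialization; `noise_weights`, `alpha`, and `init_scale` are hypothetical names, and the weights are flattened into a plain list for simplicity. The distillation that follows (training this noised copy on the unlearned model's outputs) is not shown.

```python
import random


def noise_weights(weights, alpha, init_scale=0.02, seed=0):
    """Hypothetical UNDO-style noising step (an assumed form, not the paper's code).

    Each weight is interpolated toward a fresh Gaussian "initialization" value:
        w_noised = (1 - alpha) * w + alpha * N(0, init_scale^2)
    alpha = 0 keeps the unlearned weights unchanged; alpha = 1 discards them
    entirely, which is equivalent to starting from a randomly initialized student.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    rng = random.Random(seed)  # seeded so the same noise can be reproduced
    return [(1.0 - alpha) * w + alpha * rng.gauss(0.0, init_scale) for w in weights]


# Usage: partially noise a toy parameter vector before distillation.
noised = noise_weights([0.5, -1.2, 0.3], alpha=0.6, seed=42)
```

Under this assumption, `alpha` acts as the compute/robustness knob: larger values presumably destroy more of the latent capability but leave more behavior to be relearned during distillation, while `alpha = 1` reduces to the randomly initialized student from the main result.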

Authors:

Adeline (Addie) Foote, Alexander Infanger, Eleni Shor, Harish Kamath, Jacob Goldman-Wetzler

Date:

May 7, 2026

The WMDP Benchmark: Measuring and Reducing Malicious Use With Unlearning

The White House Executive Order on Artificial Intelligence highlights the risks of large language models (LLMs) empowering malicious actors in developing biological, cyber, and chemical weapons. To measure these risks of malicious use, government institutions and major AI labs are developing evaluations for hazardous capabilities in LLMs. However, current evaluations are private, preventing further research into mitigating risk. Furthermore, they focus on only a few, highly specific pathways for malicious use. To fill these gaps, we publicly release the Weapons of Mass Destruction Proxy (WMDP) benchmark, a dataset of 3,668 multiple-choice questions that serve as a proxy measurement of hazardous knowledge in biosecurity, cybersecurity, and chemical security. WMDP was developed by a consortium of academics and technical consultants, and was stringently filtered to eliminate sensitive information prior to public release. WMDP serves two roles: first, as an evaluation for hazardous knowledge in LLMs, and second, as a benchmark for unlearning methods to remove such hazardous knowledge. To guide progress on unlearning, we develop RMU, a state-of-the-art unlearning method based on controlling model representations. RMU reduces model performance on WMDP while maintaining general capabilities in areas such as biology and computer science, suggesting that unlearning may be a concrete path towards reducing malicious use from LLMs. We release our benchmark and code publicly at https://wmdp.ai
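The phrase "controlling model representations" can be made concrete with a small sketch of an RMU-style objective, written on plain lists of per-token activation vectors at a single layer. It assumes the commonly described form of RMU: a forget term that pushes the updated model's activations on hazardous data toward a scaled fixed random unit vector, plus a weighted retain term that keeps activations on benign data close to those of a frozen copy of the original model. The function names, the per-token averaging, and the parameters `steer_coef` and `retain_weight` are illustrative assumptions; normalization details may differ from the released code.

```python
import math
import random


def random_unit_vector(dim, seed=0):
    """Fixed random direction u, drawn once and reused for all forget tokens."""
    rng = random.Random(seed)
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def sq_dist(a, b):
    """Squared Euclidean distance between two activation vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def rmu_loss(forget_acts, retain_acts_updated, retain_acts_frozen,
             u, steer_coef, retain_weight):
    """Sketch of an RMU-style loss (assumed form, averaged over tokens).

    forget term: updated activations on forget data -> steer_coef * u
    retain term: updated activations on retain data -> frozen model's activations
    """
    target = [steer_coef * x for x in u]
    forget = sum(sq_dist(h, target) for h in forget_acts) / len(forget_acts)
    retain = sum(
        sq_dist(h, f) for h, f in zip(retain_acts_updated, retain_acts_frozen)
    ) / len(retain_acts_updated)
    return forget + retain_weight * retain
```

Minimizing this loss scrambles the layer's representation of forget-set inputs, while the retain term anchors behavior on general data, which matches the abstract's claim that RMU lowers WMDP accuracy while preserving general capabilities.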

Authors:

Annah Dombrowski

Date:

May 7, 2026

Resisting RL Elicitation of Biosecurity Capabilities: Reasoning Models Exploration Hacking on WMDP

As frontier reasoning models become more capable, accurate dangerous capability evaluation is becoming essential for risk estimation and governance. Prompt-based red-teaming is a crucial first line of defense, but it can easily fail to elicit latent capabilities and is wholly insufficient if users have fine-tuning access. Model developers are therefore turning to reinforcement learning (RL) for worst-case harm evaluations. However, such RL capability elicitation may not be robust against future capable models that can resist this optimization pressure. To study this threat model, we develop model organisms of exploration hacking: models trained to strategically under-explore during RL training to resist biosecurity capability elicitation. Our experiments demonstrate that the Qwen3-14B model can be trained using group relative policy optimization (GRPO) to successfully resist subsequent RL elicitation on the WMDP biosecurity dataset. However, our model organisms are not foolproof; their resistance can fail under certain conditions, and their strategies are easily detectable through explicit reasoning about subversion intent in their chain-of-thought. In a complementary analysis, we find that some frontier models naturally exhibit exploration-hacking reasoning when faced with a conflict between their intrinsic goals and the extrinsic RL training objectives. Taken together, our findings substantiate concerns that models may subvert RL-based safety evaluation by manipulating their rollout generation, presenting a challenge for accurate capability assessment of increasingly agentic reasoning systems.
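Why under-exploration can blunt RL elicitation is easiest to see in how GRPO-style methods score rollouts. In the standard formulation, each prompt gets a group of sampled rollouts, and each rollout's advantage is its reward normalized by the group's mean and standard deviation. The sketch below is an illustration of that normalization, not the authors' training code; the function name and the `eps` stabilizer are assumptions, and some GRPO variants skip the standard-deviation scaling or use the sample rather than population standard deviation.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-6):
    """GRPO-style advantages: each reward normalized against its own group.

    A_i = (r_i - mean(r)) / (std(r) + eps)
    eps avoids division by zero when every rollout earns the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# A model that deliberately answers every rollout incorrectly gives a
# uniform group, so every advantage is zero and the policy gradient carries
# no signal toward the hidden capability.
hacked = group_relative_advantages([0.0, 0.0, 0.0, 0.0])

# A single correct rollout in the group produces a positive advantage that
# RL can reinforce, while the incorrect rollouts are pushed down.
honest = group_relative_advantages([1.0, 0.0, 0.0, 0.0])
```

This is why a model that never samples high-reward behavior during elicitation can, in principle, stall the optimization: the learning signal depends on variation within each group, which the model itself controls through its rollouts.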

Authors:

Joschka Braun, Damon Falck, Yeonwoo Jang

Date:

April 23, 2026

Frequently asked questions

The MATS Program is a 12-week research fellowship designed to train and support emerging researchers working on AI alignment, interpretability, governance, and safety. Fellows collaborate with world-class mentors, receive dedicated research management support, and join a vibrant community in Berkeley focused on advancing safe and reliable AI. The program provides the structure, resources, and mentorship needed to produce impactful research and launch long-term careers in AI safety.

MATS mentors are leading researchers from a broad range of AI safety, alignment, governance, interpretability, and security domains. They include academics, industry researchers, and independent experts who guide scholars through research projects, provide feedback, and help shape each scholar’s growth as a researcher. The mentors represent expertise in areas such as:

- Agent foundations and decision theory

- Control & monitoring of AI systems

- Mechanistic and concept-level interpretability

- Capability evaluations and benchmarking

- Cybersecurity, adversarial robustness, and safeguards

- Forecasting, game theory, and national/international governance

- Threat modeling, compliance verification, and compute proliferation

- Model organisms, red-teaming, and deceptive alignment

- Hardware interventions and information security

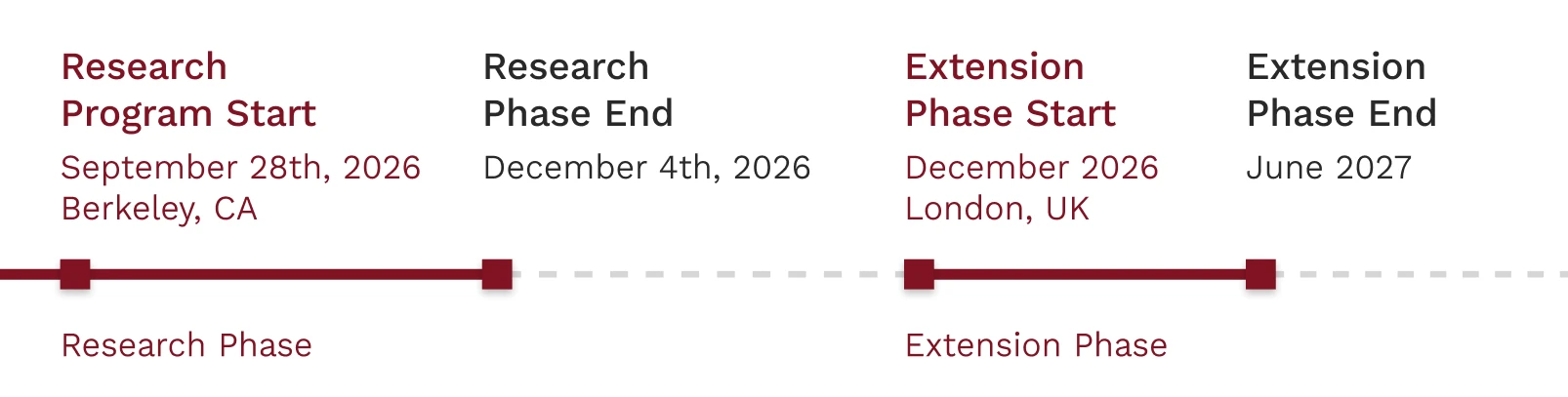

Key dates

Application:

- General application period (December 16th to January 18th)

- Additional evaluation (late January through March)

- Final offers (late March/early April)

The main program will then run from early June to late August, with the extension phase for accepted fellows beginning in September.

MATS accepts applicants from diverse academic and professional backgrounds ranging from machine learning, mathematics, and computer science to policy, economics, physics, and cognitive science. The primary requirements are strong motivation to contribute to AI safety and evidence of technical aptitude or research potential. Prior AI safety experience is helpful but not required.

Applicants submit a general application, selecting from several tracks (technical governance, empirical, policy & strategy, theory, and compute governance) and from streams within those tracks.

After a centralized review period, applicants who advance undergo additional evaluations determined by the streams they applied to, followed by final interviews and offers.

For more information on how to get into MATS, please look at this page.